009: Thinking Fast + Slow

The definitive user manual for your brain.

“Thinking, Fast and Slow” (Daniel Kahneman, 2011) is a book about decision-making and behavioral economics. It's long, dense, dry, and totally mind-blowing.

The book completely changed how I approach life / work by giving me concrete ways to think about human tendencies, biases, errors in judgment, and cognitive energy.

In it, Kahneman says that there are two primary mental "operating systems".

They reflect common patterns in how humans make decisions: How we use available information, assess risk and value, and balance between past, present, and future.

Each system serves a key purpose but can also lead us astray (neither is perfect).

Ultimately, the brain is designed to conserve energy, whenever possible. Many of the mental shortcuts he describes are the direct result of evolution and adaptation. Our species is naturally hard-wired to seek out (and use) these sorts of shortcuts.

Why did Kahneman win the Nobel Prize? Well, until recently, most economists assumed that people were rational agents who coolly optimized for self-interest.

The premise was obviously ridiculous!

People make emotional decisions and fall for cognitive illusions all the time (myself included).

Kahneman's key insight =

We do so in consistent, predictable ways.

First, some assumptions:

- Humans have evolved for survival: food, status, safety, affiliation, etc.

- Our brain is a fairly recent design. We're still a relatively new species

The two systems

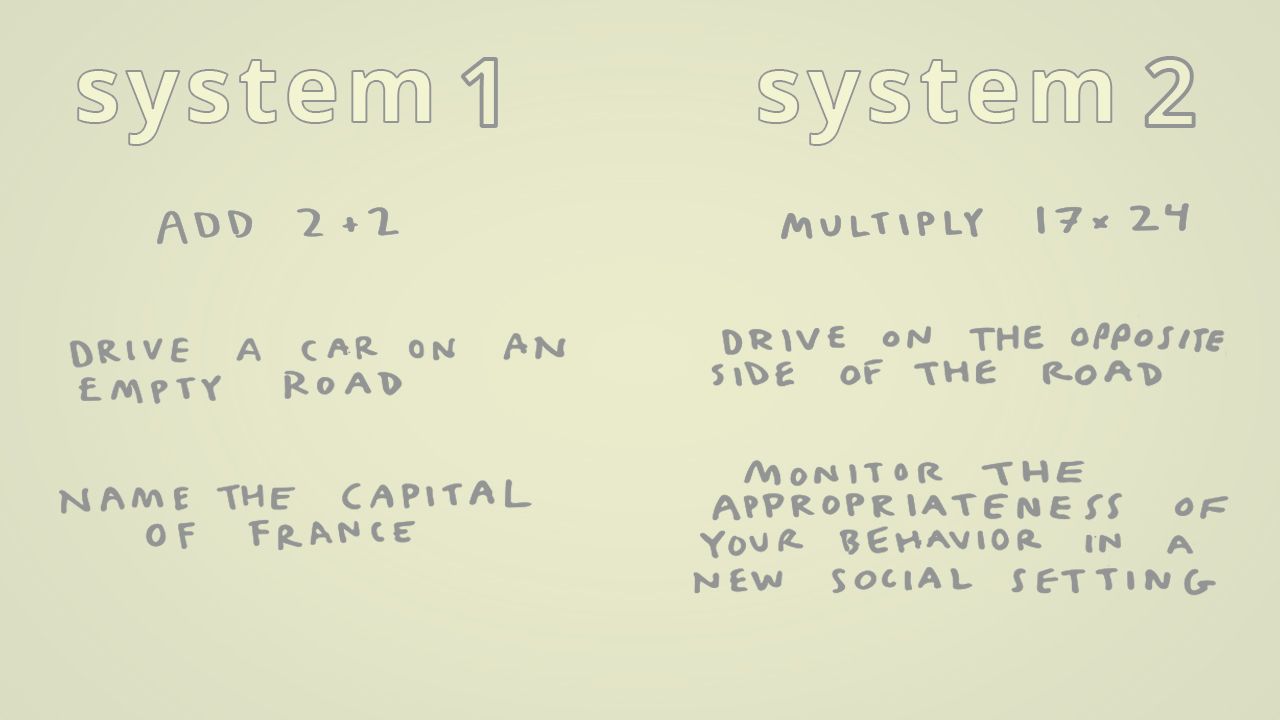

Kahneman names our two human operating systems: System 1 and System 2. These are universal modes of thinking. He describes them as:

"Agents with their individual abilities, limitations and functions."

- System 1 = Fast

- System 2 = Slow

System 1

System 1 is instinctive, unconscious, and designed for pattern recognition. It is confident and intuitive.

It is the evolutionary survival aspect of our brain.

It always kicks in first, by default.

It helps us perceive the world, recognize objects, approximate distances and recoil from danger. By maintaining simple models of the world, System 1 automatically makes metaphors and associations.

It sees situations and thinks "I know this, I can handle it".

System 2

System 2 is deliberate, conscious, and designed for complex thinking. It enables deeper analysis and is capable of experiencing some amount of doubt.

It refers to the more recent parts of our brain.

Activating System 2 requires deliberate energy.

It is more cognitively demanding and fatiguing.

It fuels comprehensive analysis and complex reasoning. But it consumes a lot of glucose along the way (fun fact: the brain loves sugar 🍰).

To be human is to use both.

The systems exist in constant dialogue with each other.

But the brain seeks to conserve cognitive effort, when possible.

- When we’re hungry, distracted or tired, we make worse decisions

- It’s hard to hold multiple conflicting thoughts simultaneously

Thus, we fall into paths of least resistance. We're vulnerable to System 1's shortcuts.

Many pages in this book are devoted to describing mental "heuristics" and common biases (more here and here, if you're curious).

Kahneman writes:

"System 1 continuously generates suggestions for System 2: impressions, intuitions, intentions, and feelings. If endorsed by System 2, impressions and intuitions turn into beliefs, and impulses turn into voluntary actions... You generally believe your impressions and act on your desires, and that is fine - usually."

The key word = usually.

Metaphors and associations usually help us make sense of the complex world. They help preserve our valuable (and limited) brain-juice.

But our default mode carries risks:

- We jump to conclusions

- We rationalize away facts that don’t fit a narrative

- We slip into selective attention (or denial)

In a way, this is human nature. Similar to the characters in Battlestar Galactica, humans make decisions emotionally, and justify them rationally.

It's frustrating, but also totally relatable.

It is always easier to spot other people's mistakes than it is to acknowledge our own.

So, what?

If you accept these claims, the world starts to look different.

For me, TFAS changed how I think about habits, marketing, design, memory, public policy, etc. It gave me new language to describe anchoring effects, loss aversion, and defaults. And a way to think about overconfidence - the tendency to overestimate our abilities and odds of success.

Once you have names for these things, you start to recognize them everywhere.

Caveat: TFAS is long and written in kind of a dry, academic style. But it's full of powerful ideas - most of which continue to stick with me today.

The best part of reading it?

Realizing that humans are wildly irrational creatures.

The worst: Realizing that I'm a total idiot too.

Further reading:

"Thinking, Fast and Slow"

"The Undoing Project"

"Nudge"

"The Two Friends Who Changed How We Think About How We Think"

Did you read this book?

What did you think?
